Poker Neural Network Example

DeepStack, one of several recent AI systems to face off against human players, defeated 11 professional poker players in heads-up no-limit hold’em, according to a study published in Science this month.

DeepStack beat 10 of the 11 players by statistically significant margins in December 2016, after the study authors used deep learning to train the bot to develop poker intuition for any situation.

The computer used two copies of the same neural network, one for the first three shared cards and another for the final two, trained on 10,000 randomly drawn poker games, Ars Technica reported.

The researchers recruited 33 players through the International Federation of Poker.

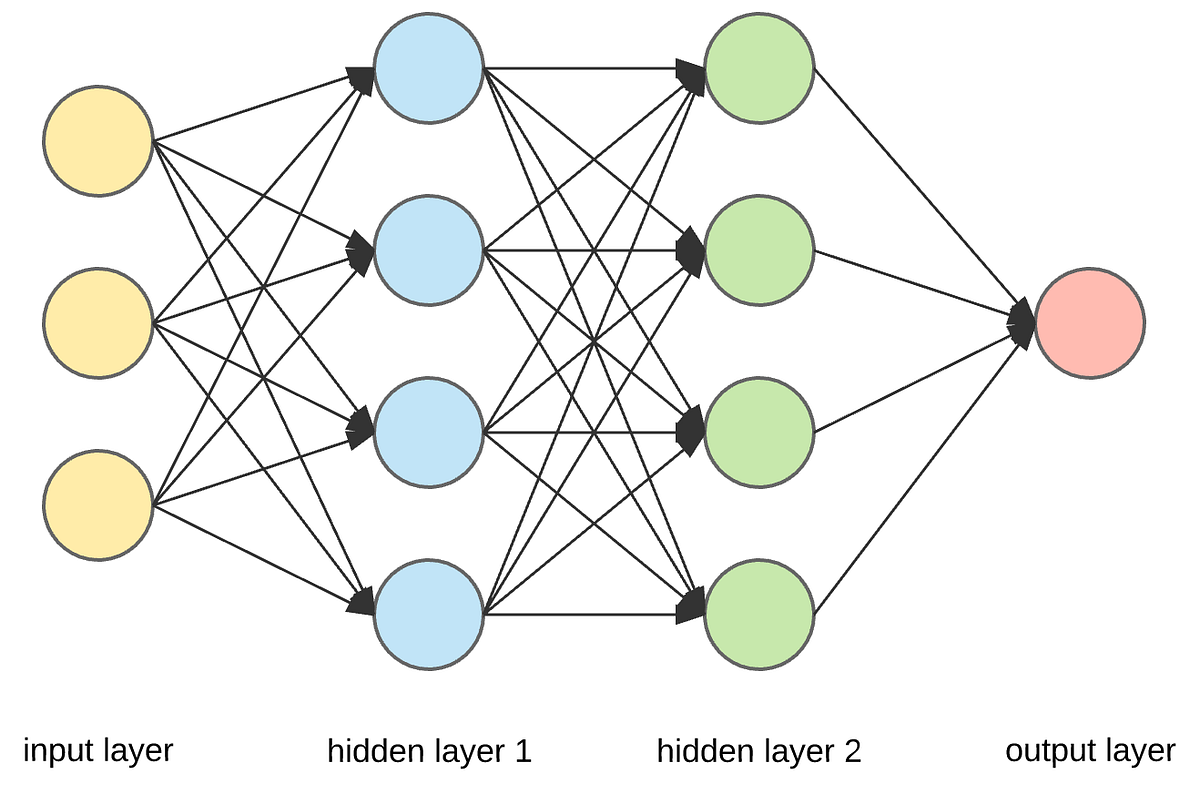

Artificial neural networks (ANNs) are computational models inspired by biological nervous systems, capable of approximating functions that depend on a large number of inputs. A network is defined by a connectivity structure and a set of weights between interconnected processing units (“neurons”).

We don’t need to get into the complex biology of the brain. Suffice it to say that the brain contains neurons, which act somewhat like organic switches: they can change their output state depending on the strength of their input.

Training a neural network repeats forward propagation and back propagation until the weights are calibrated to predict the output accurately. A simple illustration is training a network to compute the “exclusive or” (XOR) operation, which exercises each step of the training process.
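The forward and back propagation loop for XOR can be sketched in a few lines of plain Python. This is a minimal illustration only, not DeepStack's architecture: the function name `train_xor`, the four hidden neurons, the learning rate, the epoch count, and the random seed are all assumptions chosen for the example.

```python
import math
import random


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def train_xor(hidden=4, epochs=10000, lr=0.5, seed=0):
    """Train a tiny 2-input, 1-output network on XOR (hypothetical helper).

    Returns the forward function plus the mean squared error measured
    before and after training.
    """
    rng = random.Random(seed)
    data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

    # Each hidden neuron holds [weight for x1, weight for x2, bias].
    w_h = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(hidden)]
    # Output neuron holds one weight per hidden neuron, then a bias.
    w_o = [rng.uniform(-1, 1) for _ in range(hidden + 1)]

    def forward(x):
        # Forward propagation: inputs -> hidden activations -> output.
        h = [sigmoid(w[0] * x[0] + w[1] * x[1] + w[2]) for w in w_h]
        y = sigmoid(sum(w_o[j] * h[j] for j in range(hidden)) + w_o[hidden])
        return h, y

    def mean_squared_error():
        return sum((forward(x)[1] - t) ** 2 for x, t in data) / len(data)

    initial_loss = mean_squared_error()
    for _ in range(epochs):
        for x, t in data:
            h, y = forward(x)
            # Back propagation: error signal at the output for the loss
            # 0.5 * (y - t)^2, then pushed back to each hidden neuron.
            delta_o = (y - t) * y * (1 - y)
            delta_h = [delta_o * w_o[j] * h[j] * (1 - h[j])
                       for j in range(hidden)]
            # Gradient descent step on every weight and bias.
            for j in range(hidden):
                w_o[j] -= lr * delta_o * h[j]
                w_h[j][0] -= lr * delta_h[j] * x[0]
                w_h[j][1] -= lr * delta_h[j] * x[1]
                w_h[j][2] -= lr * delta_h[j]
            w_o[hidden] -= lr * delta_o
    return forward, initial_loss, mean_squared_error()


if __name__ == "__main__":
    predict, before, after = train_xor()
    print(f"mean squared error: {before:.4f} -> {after:.4f}")
    for inputs in [(0, 0), (0, 1), (1, 0), (1, 1)]:
        print(inputs, round(predict(inputs)[1], 3))
```

Each pass runs the inputs forward, compares the output with the target, and nudges every weight against its share of the error. How close the outputs get to 0 and 1 depends on the assumed settings above, so treat the printed values as something to inspect rather than a guarantee.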

Only 11 players finished 3,000 matches over the course of the four-week period. DeepStack’s neural networks were what allowed it to essentially “learn” and model higher-level concepts while running on a gaming laptop with an NVIDIA GTX 1080 graphics card. DeepStack was developed by researchers at the University of Alberta and a number of Czech universities.

DeepStack works through situations as humans would, learning pieces of the game as it goes and creating a strategy to defeat its human opponents.

“In some sense this is probably a lot closer to what humans do,” Michael Bowling, a professor of machine learning and an author of the study, told Scientific American. “Humans certainly don’t, before they sit down and play, precompute how they’re going to play in every situation. And at the same time, humans can’t reason through all the ways the poker game would play out all the way to the end.”

DeepStack isn’t the only poker-playing artificial intelligence out there. Carnegie Mellon’s Libratus, running on a supercomputer, recently beat four professional players of more elite status. Its technology is similar to DeepStack’s in the later stages of computing, but it does not use the same neural networks, according to Scientific American. DeepStack also won by larger margins.


Past attempts with Claudico didn’t pan out, but Google DeepMind’s AlphaGo beat pros at the game of Go. Even more notably, 20 years ago, Deep Blue beat World Chess Champion Garry Kasparov at his own game.

This finding reveals a lot about artificial intelligence’s ability to master imperfect-information games without relying on abstraction (that is, computing how to play in every situation before the game begins).


Lead image courtesy of Thigala shri/Flickr

